Voice agent

Voice agents at sub-600ms on a single GPU.

Speech, chat, and TTS open models on one L4. Swap in any compatible Hugging Face model ID.

- End-to-end p50

- 540ms

- Throughput lift

- 3.4x

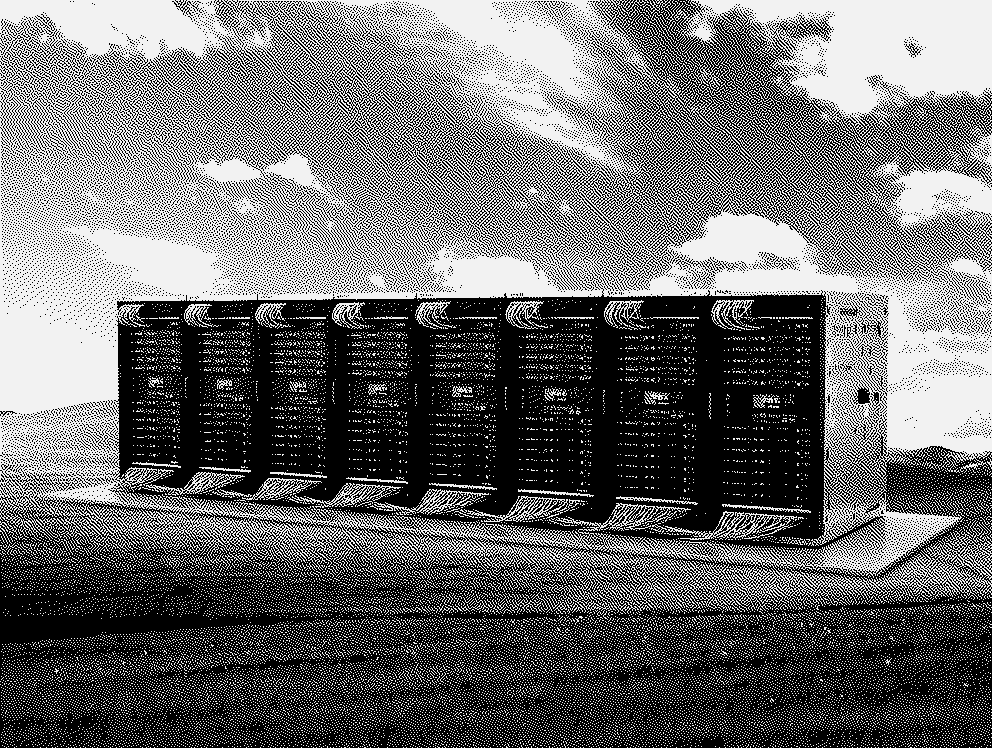

- Starter GPU

- 1 L4

- Accuracy budget

- 0%

Starter values. Retunes per HF model and GPU.

What you actually own

The optimization knobs, the codebase, and the model choice. None of them locked away.

Tune every optimization on your terms.

Kernels, quantization, KV cache, speculation, serving config. The agent runs the full sweep and surfaces every trade-off.

You own the full codebase, end to end.

Export the Dockerfile, serve script, and config. Run on managed RunInfra or your own GPUs. No proprietary runtime.

Any Hugging Face model fits.

Paste any compatible HF model ID. The agent retunes the kernels, quantization, and serving config around it.

What RunInfra tunes for every model

Six engines, every run, retuned per model and GPU.

Kernel sweep

FlashAttention, FlashInfer, Marlin, custom fusion. Correctness + speedup gates on every rewrite.

Quantization

FP8, AWQ, GPTQ, HQQ, INT8 SmoothQuant, NVFP4. Quality scored against your accuracy floor.

KV cache

FP8, INT4, and TurboQuant 3-4 bit KV compression. 60 to 75% VRAM savings.

Speculative decoding

EAGLE3, MTP, n-gram lookup, draft model. 1.3 to 2x decode speedup, weights untouched.
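For intuition on where a decode speedup of that size can come from, here is a back-of-envelope sketch using the standard speculative sampling estimate: if each drafted token is accepted independently with probability `alpha` and `k` tokens are drafted per step, one target-model pass yields `(1 - alpha^(k+1)) / (1 - alpha)` tokens on average. The acceptance rate, draft length, and `draft_cost_ratio` are illustrative assumptions, not RunInfra measurements.

```python
def expected_tokens_per_pass(alpha: float, k: int) -> float:
    """Average tokens emitted per target-model forward pass.

    Assumes each of the k drafted tokens is accepted independently
    with probability alpha (a simplification of real acceptance behavior).
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    if k < 0:
        raise ValueError("k must be non-negative")
    if alpha == 1.0:
        # Every draft is accepted, plus the one token the target adds itself.
        return float(k + 1)
    return (1.0 - alpha ** (k + 1)) / (1.0 - alpha)


def estimated_speedup(alpha: float, k: int, draft_cost_ratio: float) -> float:
    """Rough decode speedup versus plain autoregressive decoding.

    draft_cost_ratio is the (hypothetical) cost of one draft step
    relative to one target-model pass, e.g. 0.05 for a small draft model.
    """
    return expected_tokens_per_pass(alpha, k) / (k * draft_cost_ratio + 1.0)


if __name__ == "__main__":
    for alpha in (0.5, 0.7, 0.8):
        print(alpha, round(estimated_speedup(alpha, k=4, draft_cost_ratio=0.05), 2))
```

The estimate ignores batching effects and verification overhead, so measured speedups are typically lower than what it predicts.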

Serving config

Continuous batching, chunked prefill, PagedAttention, prefix cache. Tuned across vLLM, SGLang, TensorRT-LLM.
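As a rough picture of what a serving config can look like on one of these engines, the sketch below assembles a `vllm serve` command line from a small config object. It is not RunInfra's exported serve script. The model ID is a placeholder, the values are illustrative, and flag availability and defaults vary across vLLM versions, so check the version you deploy.

```python
import shlex
from dataclasses import dataclass


@dataclass
class ServingConfig:
    """Hypothetical config holder; field values are placeholders, not tuned results."""
    model_id: str
    quantization: str = "awq"
    kv_cache_dtype: str = "fp8"
    max_num_seqs: int = 32
    gpu_memory_utilization: float = 0.35
    chunked_prefill: bool = True
    prefix_caching: bool = True


def vllm_serve_args(cfg: ServingConfig) -> list[str]:
    """Build the argument list for `vllm serve`.

    Continuous batching and PagedAttention are part of vLLM's default
    scheduler, so they need no flag here.
    """
    args = [
        "vllm", "serve", cfg.model_id,
        "--quantization", cfg.quantization,
        "--kv-cache-dtype", cfg.kv_cache_dtype,
        "--max-num-seqs", str(cfg.max_num_seqs),
        "--gpu-memory-utilization", str(cfg.gpu_memory_utilization),
    ]
    if cfg.chunked_prefill:
        args.append("--enable-chunked-prefill")
    if cfg.prefix_caching:
        args.append("--enable-prefix-caching")
    return args


if __name__ == "__main__":
    cfg = ServingConfig(model_id="your-org/your-awq-model")  # placeholder ID
    print(shlex.join(vllm_serve_args(cfg)))
```

Keeping the config as data makes it easy to diff two candidate setups before deploying either one.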

Multi-cloud capacity

Pareto GPU selection across L4 to B200. Managed or exportable.
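Pareto selection here means keeping only the options that no other option beats on both latency and cost. A minimal sketch of that filter follows. The candidate names and numbers are invented for illustration and are not benchmarks or prices for any real GPU.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    name: str
    p50_ms: float        # lower is better
    usd_per_hour: float  # lower is better


def dominates(a: Candidate, b: Candidate) -> bool:
    """True if a is at least as good as b on both axes and strictly better on one."""
    no_worse = a.p50_ms <= b.p50_ms and a.usd_per_hour <= b.usd_per_hour
    strictly_better = a.p50_ms < b.p50_ms or a.usd_per_hour < b.usd_per_hour
    return no_worse and strictly_better


def pareto_front(candidates: list[Candidate]) -> list[Candidate]:
    """Keep every candidate that no other candidate dominates."""
    return [
        c for c in candidates
        if not any(dominates(other, c) for other in candidates)
    ]


# Hypothetical options; every number below is made up.
CANDIDATES = [
    Candidate("option-a", 540.0, 0.80),
    Candidate("option-b", 410.0, 1.90),
    Candidate("option-c", 430.0, 3.20),  # slower and pricier than option-b
    Candidate("option-d", 300.0, 4.50),
    Candidate("option-e", 250.0, 8.00),
]

if __name__ == "__main__":
    for c in pareto_front(CANDIDATES):
        print(c.name, c.p50_ms, c.usd_per_hour)
```

Every option left on the front is a legitimate trade-off; choosing among them depends on your latency target and budget.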

Any HF voice model works

Every voice-compatible model on Hugging Face runs through the same recipe. Search the full Hugging Face catalog, then paste a compatible model ID into RunInfra to retune the recipe.

Three ways to ship voice

Most teams choose between speed and control. RunInfra keeps both in one workflow.

| What matters | RunInfra: Fast path with model control and export. | Closed APIs: Fast start, locked runtime. | DIY self-hosting: Full control, heavy operations. |
|---|---|---|---|
| 01 Launch | Pick model, optimize, deploy. Start quickly and keep the production path open. | Call provider endpoint. Fast first demo, but the runtime stays rented. | Build serving stack first. Infrastructure work comes before product learning. |
| 02 Model control | Bring the model ID. Keep model choice and serving decisions visible. | Provider catalog. You use what the provider exposes. | Your model. Full control if your team maintains the runtime. |
| 03 Tuning | Measured latency and GPU cost. Compare serving choices before deployment. | Opaque. Latency and batching stay behind the API. | Manual profiling. Your team owns tuning and regressions. |
| 04 Export | Managed now, export when needed. Use the endpoint first and take the deploy package later. | Locked endpoint. You keep calling the provider. | Already owned. Export exists because you built everything yourself. |
| 05 Operations | Low until you choose to own it. Operate managed, then export with the same measured plan. | Low, with lock-in. Less infra work, less production control. | High. You own infra, failures, upgrades, and serving changes. |

Try this pipeline

Edit the model, engine, or GPU inline, then send the pipeline to the dashboard to retune the stack.

Questions founders usually ask

Need a custom configuration? Talk to our team.

Does this really fit on a single L4?

Yes. Whisper Large V3 in streaming mode uses about 3 GB of VRAM, Llama 3.2 3B Instruct in AWQ INT4 uses about 2.5 GB, and the Chatterbox vocoder uses about 2 GB, for roughly 7.5 GB in total. That leaves about 16.5 GB of the L4's 24 GB for KV cache and concurrent calls. For higher concurrency, move to an L40S (48 GB).
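The arithmetic behind that answer can be sketched as a small budget check. The per-model figures are the approximate values quoted above. `reserve_gb` is a hypothetical knob for runtime overhead such as the CUDA context and is not a figure from this page.

```python
# Approximate VRAM figures quoted in the answer above, in GB.
MODEL_VRAM_GB = {
    "whisper-large-v3 (streaming)": 3.0,
    "llama-3.2-3b-instruct (AWQ INT4)": 2.5,
    "chatterbox vocoder": 2.0,
}

GPU_VRAM_GB = {"L4": 24.0, "L40S": 48.0}


def headroom_gb(gpu: str, reserve_gb: float = 0.0) -> float:
    """VRAM left for KV cache and concurrent calls after loading all three models.

    reserve_gb is an assumed allowance for runtime overhead; it defaults to 0
    so the result matches the plain subtraction in the text.
    """
    return GPU_VRAM_GB[gpu] - sum(MODEL_VRAM_GB.values()) - reserve_gb


if __name__ == "__main__":
    for gpu in GPU_VRAM_GB:
        print(f"{gpu}: {headroom_gb(gpu):.1f} GB headroom")
```

Swapping in a different model only means editing one entry in `MODEL_VRAM_GB` and rerunning the check.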

Own your AI. We benchmark GPUs, optimize kernels, and deploy open-source models as production APIs.

Start building